It’s the itch you feel to check your phone and the relief you experience once you do

We all know what it feels like to be tethered to technology these days. It’s not just our kids; it’s the rest of us as well. Probably one of the biggest hooks is the smartphone. As of 2016, there were about 2.1 billion smartphones worldwide. Considering there are about 7.6 billion people on the planet, that’s a LOT of smartphones! Then there’s social media. Facebook alone has over 2 billion users. Many people worry about smartphone addiction or screen addiction. We all know how we can get sucked into compulsively checking our smartphones, especially to text or use social media. In previous blogs, I discussed how we are drawn to screens and how they meet our needs. I also blogged about how both classical conditioning and supernormal stimuli can compel us to check our screens. However, there’s a third mechanism through which we get sucked into our devices: variable reinforcement. How variable reinforcement and screens work together is the topic of this blog.

Technology Isn’t Necessarily Bad

As I’ve said before, technologies such as smartphones, video games, and social media aren’t inherently bad. They provide countless benefits. If they didn’t, we wouldn’t use them! We like to use various technologies because they can meet our psychological needs for relatedness, autonomy, and competence. In a sense, the many practical, entertainment, and social reasons for using smartphones ultimately involve getting our psychological needs met. However, a curious thing happens with our technologies. We start to use them in a compulsive way that often starts to resemble an addiction.

Smartphone Addiction

The way we feel about our phones is beginning to look like the way a smoker jonesing for a cigarette feels. We just HAVE to check our smartphones. We begin to check our phones so frequently that it interferes with our relationships, productivity, and safety. Why do we keep checking our smartphones so compulsively? Why is it so hard to disengage from them and leave them in our purses, pockets, or another room? One particular mechanism appears to be involved and can explain this powerful hook: the variable reinforcement schedule.

What Are Reinforcement Schedules?

If you ever took an introductory psychology course, chances are you’ve heard of B.F. Skinner. He was a psychologist and behaviorist who looked at how behavioral responses were established and strengthened by different schedules of reinforcement. For instance, a rat in a cage that is taught to press a lever to earn a food pellet (reward) might be taught that it gets one food pellet for every 3 presses of the lever. This would be an example of a fixed ratio reinforcement schedule, because the reward depends on a set number of responses rather than on the passage of time.

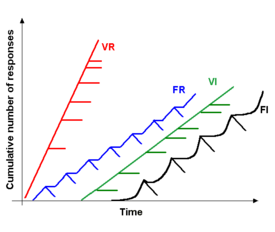

Although there are a variety of types and subtypes of reinforcement schedules that can affect the likelihood of different behavioral responses, I’m going to briefly discuss the variable ratio reinforcement schedule.

A variable ratio reinforcement schedule occurs when a reward is delivered after an unpredictable number of actions. Using the rat example, the rat doesn’t know how many presses of the lever will produce the food pellet. Sometimes it is 1, other times it is 5 or 15…it never knows. It soon learns, though, that the faster it pushes the lever, the sooner it will receive the pellet. Researchers have found that variable ratio schedules tend to result in a high rate of responses (refer to the VR line in the graph above). Also, behaviors learned on variable ratio schedules are extremely resistant to extinction. In the case of the rat, if the researchers stop giving pellets of food after the lever is pressed, the rat will keep pushing the lever frequently for a very long time before it finally gives up (which is the extinction part). Slot machines are a real-world example of a variable ratio schedule.
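For readers who like to tinker, here is a rough Python sketch of the rat example. It compares a predictable schedule (one pellet for every 3 presses) with a variable ratio schedule. The function names are made up for illustration, and drawing the required number of presses from a uniform range is simply my assumption for the demo, not how any particular experiment was run.

```python
import random


def fixed_ratio_schedule(ratio):
    """Yield True on every `ratio`-th lever press and False otherwise."""
    count = 0
    while True:
        count += 1
        if count == ratio:
            count = 0
            yield True
        else:
            yield False


def variable_ratio_schedule(mean_ratio, rng):
    """Yield True after an unpredictable number of presses.

    Assumption for this sketch: the required number of presses is drawn
    uniformly from 1 to 2 * mean_ratio - 1, so it averages `mean_ratio`.
    """
    while True:
        required = rng.randint(1, 2 * mean_ratio - 1)
        for _ in range(required - 1):
            yield False
        yield True


def presses_per_reward(schedule, n_rewards):
    """Press the lever until `n_rewards` pellets arrive.

    Returns a list with the number of presses each pellet took.
    """
    gaps = []
    presses = 0
    for rewarded in schedule:
        presses += 1
        if rewarded:
            gaps.append(presses)
            presses = 0
            if len(gaps) == n_rewards:
                break
    return gaps


if __name__ == "__main__":
    rng = random.Random()  # unseeded, so each run looks different
    print("Fixed ratio:   ", presses_per_reward(fixed_ratio_schedule(3), 8))
    print("Variable ratio:", presses_per_reward(variable_ratio_schedule(5, rng), 8))
```

The fixed schedule always takes the same number of presses per pellet, so the rat can predict the payoff. The variable schedule produces a different list every run, which is the "it never knows" part.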

Variable Reinforcement in Our Daily Lives

It turns out, variable ratio reinforcement schedules are involved in many behavioral addictions, such as gambling. Yes, that’s right. In a sense, compulsively checking our phones is much like compulsive gambling. In fact, many “obsessions” and hobbies also involve this variable ratio reinforcement schedule, such as:

Fishing

Hunting

Basically any type of collecting (e.g., collecting Pokemon cards, stamps)

Looking for bargains while shopping

Channel or Internet surfing

Why Are Variable Reinforcement Schedules Powerful?

Variable reinforcement schedules are NOT bad. They are one of the ways that we learn. However, they can be very powerful. We learn causal relationships from “connecting the dots.” From an evolutionary perspective, learning causal connections enhances our chances of survival. For instance, if I do “Action A” then “B” is the likely result. When there is a variable relationship, performing “Action A” means that “B” might be the result, but it might not. The reward system in the brain releases dopamine in fairly large amounts in variable situations to motivate the organism to pay attention so that it might learn the causal connection. In essence, the brain is trying to “crack the code.” When the relationship between two stimuli is variable, the reward center of the brain keeps releasing dopamine so that we can try to figure out the connection.

Variable Reinforcement and Technology

It is easy to see how technologies such as social media, texting, and gaming work on a variable reinforcement schedule. We never know what to expect. Who posted to Facebook? What did they post? Who commented on my post? What did they say? My cell is buzzing – what could this be about? Did Trump tweet something crazy today?

The moment our smartphones buzz or chime, this reward system is activated. Importantly, it is the anticipation phase that is key to the activation of this reward system. We just HAVE to find out this information, whatever it is. It feels like an itch that needs to be scratched or a thirst that needs to be quenched. Variable reinforcement and screens form a powerful combination. In my next blog, I’ll discuss the brain and tech addiction in a bit more detail.